100+ Years of Software Evolution: From Punch Cards to AI

Around 30 million people work in software engineering today. Hundreds of apps and websites are created every minute. How did we get to this point? Let's take a journey back.

It always amazes me to look at the massive scale and fast pace of software development today. As of 2024, the number of people working in software engineering worldwide is estimated to be around 30 million. Fifty years ago, by comparison, there were only around 100,000 to 200,000 software developers worldwide. Software has become more and more important in our daily lives. For our generation, it is hard to imagine living a day without interacting with software.

I want to take you on a journey back through history, to see where we came from and to learn how software has evolved up to this day. We'll go through the important milestones that shaped software development.

Are you ready? Let the journey begin…

Hardwired Computers and Punch Cards

Often considered the "father of the computer", Charles Babbage conceived and designed the first mechanical computer in the early 19th century. Computers started out mechanical, then became electro-mechanical, and then fully electronic in the 1940s. The computers of the earliest days were literally "machines": they were huge, with wires and knobs everywhere, and often filled an entire room.

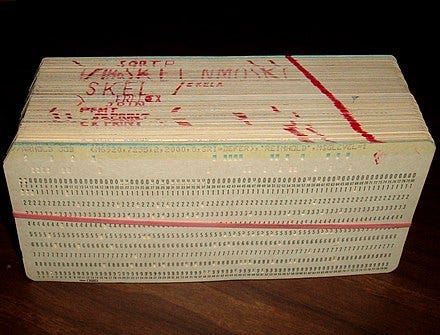

Back in the early 1900s, software was not like anything you might think of today. It was something tangible that you could hold in your hands. To perform a specific task, for example calculating accounting data, the "hardware" computer engineer would input the instructions through a card reader using punch cards. Punch cards were stiff paper cards with holes punched into them in specific patterns. Each group of holes on the card could represent a single operation or piece of data, encoded by the presence or absence of a hole at each position. The concept of "software" didn't even exist yet. Back then, the profession of "engineer" meant hardware engineers, not programmers or software engineers.
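To make the idea of "holes as data" concrete, here is a minimal sketch in Python. It is a simplified illustration and not a real card format: the function names `punch_row` and `read_row`, the 8-position width, and the `O`/`.` notation are all assumptions made for this example.

```python
def punch_row(value: int, width: int = 8) -> str:
    """Encode a number as one row of a hypothetical card: 'O' is a hole, '.' is no hole."""
    if not 0 <= value < 2 ** width:
        raise ValueError("value does not fit in one row")
    bits = format(value, f"0{width}b")  # e.g. 5 -> "00000101"
    return "".join("O" if bit == "1" else "." for bit in bits)


def read_row(row: str) -> int:
    """Decode a row of holes back into the number it represents."""
    bits = "".join("1" if ch == "O" else "0" for ch in row)
    return int(bits, 2)


if __name__ == "__main__":
    for number in (5, 42, 255):
        row = punch_row(number)
        print(number, "->", row, "->", read_row(row))
```

Reading the row back gives the original number, which is roughly what a card reader did when it sensed where the holes were.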

Stored-program Concept

In the late 1940s, a groundbreaking idea known as the Stored-Program Concept was introduced. This concept, commonly attributed to the mathematician and physicist John von Neumann, allowed instructions (programs) to be stored in a computer's memory alongside the data. This meant that instructions, like the steps to perform a calculation, could be treated just like any other piece of data. So instead of having to physically alter the machine to change its task, the computer could just read different instructions from memory and execute them. This meant computers needed far less rewiring. The concept also allowed a computer to scale its capability: the bigger the memory, the more capable the computer could become.
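A minimal sketch in Python may help show what "instructions stored alongside the data" means. The opcodes (`LOAD`, `ADD`, `SUB`, `STORE`, `HALT`), the two-cell instruction layout, and the `run` function are hypothetical and invented for this illustration; they do not model any real historical machine.

```python
# Hypothetical opcodes for a toy machine (not a real instruction set).
HALT, LOAD, ADD, STORE, SUB = 0, 1, 2, 3, 4


def run(memory: list[int]) -> list[int]:
    """Execute the program held in `memory`; instructions and data share the same list."""
    acc = 0  # accumulator register
    pc = 0   # program counter: address of the next instruction
    while True:
        opcode, operand = memory[pc], memory[pc + 1]
        pc += 2
        if opcode == LOAD:
            acc = memory[operand]
        elif opcode == ADD:
            acc += memory[operand]
        elif opcode == SUB:
            acc -= memory[operand]
        elif opcode == STORE:
            memory[operand] = acc
        elif opcode == HALT:
            return memory
        else:
            raise ValueError(f"unknown opcode {opcode} at address {pc - 2}")


if __name__ == "__main__":
    # Addresses 0-7 hold instructions, addresses 8-10 hold data.
    memory = [LOAD, 8, ADD, 9, STORE, 10, HALT, 0, 2, 3, 0]
    print(run(list(memory))[10])  # 2 + 3

    # Changing the task is just writing a different value into memory.
    memory[2] = SUB
    print(run(list(memory))[10])  # 2 - 3
```

The second run changes the machine's behavior by overwriting one memory cell, with no rewiring involved, which is the core of the idea.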

This breakthrough idea laid the foundation for modern software. The stored-program computer was the first major step in the creation of what we call software today.

Once the stored-program concept took root, the next challenge was figuring out how to write those instructions. The first "software" was written in machine language, a low-level code made entirely of binary (0s and 1s). Imagine trying to instruct a computer to add two numbers using only ones and zeros—it was extremely tedious.

The Birth of the Compiler

But in 1952, the American computer scientist Grace Hopper made a breakthrough that changed everything. She developed what is widely regarded as the first compiler, a tool that could translate human-readable code into machine language.

Hopper’s compiler made programming faster, easier, and accessible to a broader range of people. This meant programmers could write code in a more human-understandable and natural way, and the compiler would handle the translation into the binary machine code instructions the computer could execute.
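As a rough illustration of what "translation" means here, the sketch below turns a small arithmetic expression into instructions for a toy stack machine and then runs them. It is not how Hopper's compiler worked: it borrows Python's own parser through the standard `ast` module, and the instruction names (`PUSH`, `ADD`, `MUL`) and the functions `compile_expr` and `execute` are assumptions made for this example.

```python
import ast

# Map Python's parsed operators to hypothetical stack-machine instructions.
OPS = {ast.Add: "ADD", ast.Mult: "MUL"}


def compile_expr(source: str) -> list[str]:
    """Translate an expression such as '2 + 3 * 4' into stack-machine instructions."""
    def emit(node: ast.AST) -> list[str]:
        if isinstance(node, ast.Constant) and type(node.value) is int:
            return [f"PUSH {node.value}"]
        if isinstance(node, ast.BinOp) and type(node.op) in OPS:
            # Operands first, operator last (postfix order).
            return emit(node.left) + emit(node.right) + [OPS[type(node.op)]]
        raise ValueError("only integers, + and * are supported in this sketch")

    return emit(ast.parse(source, mode="eval").body)


def execute(program: list[str]) -> int:
    """Run the instructions on a simple stack and return the result."""
    stack: list[int] = []
    for instruction in program:
        if instruction.startswith("PUSH "):
            stack.append(int(instruction[5:]))
        else:
            right, left = stack.pop(), stack.pop()
            stack.append(left + right if instruction == "ADD" else left * right)
    return stack.pop()


if __name__ == "__main__":
    program = compile_expr("2 + 3 * 4")
    print(program)
    print(execute(program))
```

The programmer writes `2 + 3 * 4`; the machine only ever sees the flat list of low-level steps.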

The invention of compilers opened the way to the development of high-level programming languages.

The Rise of High-level Languages

In the early days of computing (1940s–early 1950s), programmers worked with machine language (binary code like 10101011) or assembly language (like ADD or SUB), which was a symbolic representation of machine code. Both were closely tied to the hardware, meaning the programmer had to understand the specifics of the machine to write programs.

Thanks to compilers, programmers could now write more human-readable code, which made programming faster and more accessible. IBM's FORTRAN (1957), LISP (1958), and COBOL (1959) were the first widely adopted programming languages. COBOL became incredibly popular in business and government applications, particularly for tasks like payroll, inventory management, and record-keeping.

These languages allowed programmers to focus on solving problems rather than worrying about the complexity of hardware specifications. By the end of the 1950s, high-level languages had become widely accepted across industries. Universities started teaching these languages to students, and businesses adopted them for practical applications.

The Recognition of the “Software Engineer” Role

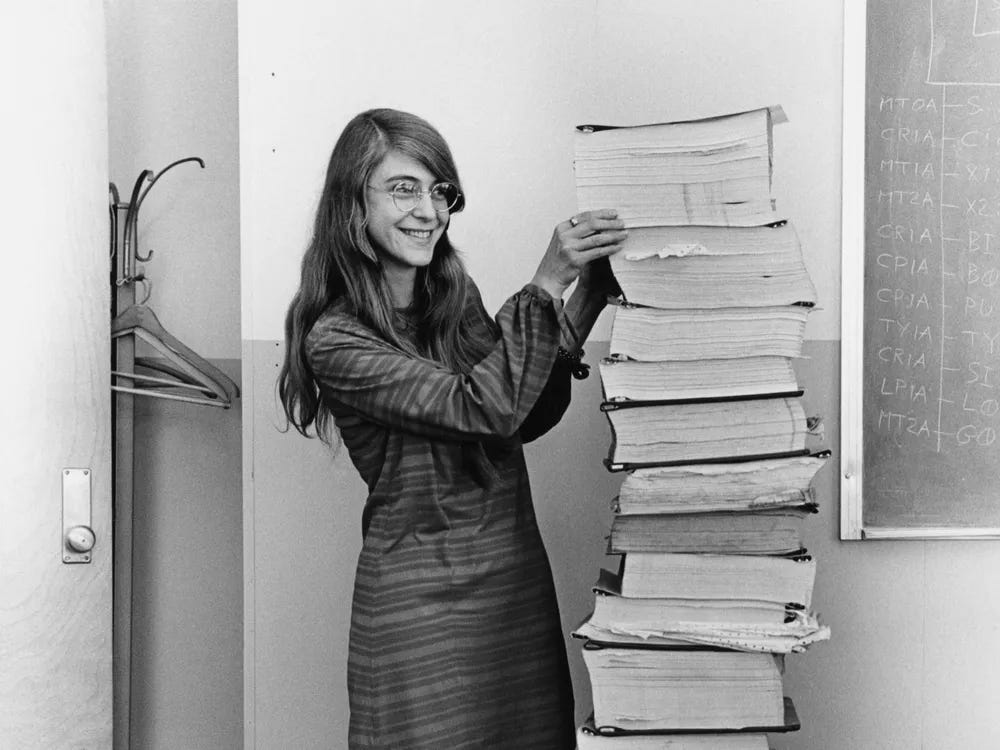

Margaret Hamilton, a computer scientist and systems engineer, made history in the 1960s. She is credited with coining the term "software engineering" while working on NASA's Apollo space missions, where she led the team that developed the onboard flight software that helped land astronauts on the moon. What a cool job!

Hamilton believed that people who write computer programs should be called engineers, just like those who design bridges or machines. This idea helped give more respect and recognition to the work of creating software.

Since then, people have been able to proudly call themselves “software engineers”.

The “Software Crisis”

The “software crisis” happened in the 1960s and 1970s. During this time, people needed more and more software, but developers and companies couldn't make good software fast enough. The software they produced often had problems, didn't work well, and was hard to fix or improve. Even big companies ran into these issues.

The main cause of this crisis was the lack of good development methodologies. Developers often wrote code without formal planning, testing, or documentation. This lack of structure led to unmanageable and buggy software, especially in larger projects.

Another issue was the programming tools. There were no integrated development environments (IDEs) like the ones we have now; software development tools in those days were rudimentary.

The software crisis was a turning point in software development and led to significant breakthroughs. In the following years, software engineers started coming together to look for solutions.

Software engineering became a new formal discipline, which aimed to bring engineering principles (such as planning, designing, testing, and quality assurance) into software development. Programming languages like Pascal and later C were developed with structured programming in mind.

The waterfall methodology is usually traced back to a 1970 paper by Winston W. Royce. It is commonly summarized as six stages for building complex software: requirements analysis, design, implementation, verification, integration, and maintenance. The concept of object-oriented programming was also born in this period, with the term credited to Alan Kay.

Better tools were also emerging to support software development, such as IDEs, version control systems, and debugging tools. Many believe Microsoft's Visual Basic (VB), launched in 1991, was the first real IDE.

The Trend of Personal Computing

Before the 1980s, computers were primarily used by large institutions, governments, and businesses. But then, with advances in hardware and software, the personal computer (PC) took off. Computer development increasingly focused on the needs of the individual user, such as typing, drawing, and calculating: tasks that are more personal. We recognize pioneers like Steve Wozniak, Steve Jobs, and Bill Gates for their success in bringing the PC into homes everywhere.

More ordinary people got the chance to get their hands on smaller commercial computers at home. As a result, more people became interested in developing software that could run on their PCs. Desktop apps and games were the trend of that era.

In the 2000s, the personal computer underwent another major transformation as laptops, and later tablets, became mainstream. These portable devices made it possible for people to take their computers with them on the go and to use them in new and different ways.

The Internet Era

The rise of the internet in the 1990s transformed the software world yet again. Suddenly, the focus shifted to web-based applications, and new languages like HTML, JavaScript, and CSS emerged. This allowed developers to create software that could be accessed from anywhere, as long as there was an internet connection.

With the internet, the separation of client and server software became popular. This laid the foundation for modern web applications. On the server side, languages like PHP and Java started to emerge.

The biggest impact of the internet was that it enabled the sharing of information and knowledge. Engineers around the world could now learn from each other and push software development forward even faster.

The open-source movement also shaped the world of software development. The practice of openly sharing source code gained momentum with projects like Linux in the early 1990s. Linux is an operating system built by developers all over the world, working together on something that everyone can use for free. Today, popular open-source projects like Python, Node.js, and Git are fundamental tools for modern developers.

Also, we cannot forget about the Internet of Things (IoT). In 1999, Kevin Ashton coined the term "Internet of Things", describing a system of interconnected devices that communicate via the internet. With hardware sensors and an internet connection, a device can send data to be used by another party. Programming languages that can work with hardware sensors, such as C, Java, and Python, became essential for building this kind of software.

Mobile Computers

Fast forward to the 2000s, when computer chips became smaller and smaller. They could now be embedded in smaller mobile devices, like phones and tablets. The demand for software on these devices became massive. We call that software apps.

Mobile apps became a trend of their own. Every day, people try new apps and carry them everywhere on their phones. Apps that use capabilities like GPS, cameras, and the internet have made people more efficient and productive in their everyday work and hobbies.

How many apps are in Apple's App Store and Google Play combined? Together, there are roughly 4.4 million. This is where the saying “there's an app for that” comes from.

The AI Breakthrough

The pursuit of artificial intelligence is a long story that goes back to the 1940s and 1950s. In the early 1970s, the capabilities of AI programs were limited because there was not enough computer memory or processing speed. But as computer hardware, chips, and memory improved rapidly, and as the internet and open data became available, AI finally gained momentum.

Suddenly, here we are in 2024, and everyone is talking about AI. AI can now understand human language. Models such as OpenAI's GPT-3, with their impressive natural language generation capabilities, have captured the public imagination. You can now do things faster and more efficiently with the help of AI.

Now you can make software with AI without directly typing the programming code. This is another level of “abstraction”. You can just say or prompt what you want, and the AI will generate the code for you in whatever programming language you ask for.

In this era, software is produced rapidly because the process keeps getting easier as AI handles more of the hard parts.

And here we are… back in the present. Although the timeline above offers just a glimpse of some key milestones, I hope it gives you a sense of how far we have come.

My takeaways…

Software evolution is about the evolution of code “abstraction”. Code has evolved from low-level instructions toward something closer to natural language. We might reach the point where we code using voice commands without touching the keyboard. Thanks to all the pioneers who opened the way for us. We are indeed standing on the shoulders of giants.

Computers are becoming more and more about the software. We may not even see the physical computer anymore. With the internet, the cloud, and AI, software is increasingly about the content on a screen.

Hardware and software affect each other. Advances in hardware, such as faster processors, increased memory, and improved graphics, create opportunities for software developers to build more powerful and sophisticated programs that take advantage of those enhancements. In turn, the inspiration of what software can do pushes hardware to innovate further.

What remains constant is that software is always used to solve the problems of its era. Simple problems require simple software. As we advance, we face more complex problems, which call for more complex software. People in the past could not have solved the problems of our time for us. It is our turn to answer the challenges of our time.

Also, the problems that software tackles are shifting from big corporate problems to more personal ones. There is always room for great software to solve a problem in somebody's life.

As software developers, we're in an era where almost anything is possible. We have come so far. Now we can create anything faster. We can learn anything faster.

Like and share this article if you found it inspiring. Until the next article.